TORONTO -- Apple has unveiled a slew of features designed to make its products more accessible for people with disabilities, ranging from one-handed gesture controls for the Apple Watch to eye-tracking support for the iPad.

"We believe everyone should have the tools they need to change the world. Accessibility is a fundamental right, and we’re always pushing the boundaries of innovation so that everyone can learn, create and connect in new ways," Apple CEO Tim Cook tweeted on Wednesday.

Among the new features for the Apple Watch are gesture-based accessibility controls, which the company calls AssistiveTouch. People with limb differences, or who are otherwise unable to use the Apple Watch's touch screen, can instead make clenching and pinching motions with their hand to control their watch.

For example, an AssistiveTouch user can clench their fist twice to answer a call or pinch to navigate menus. Apple Watch users can also activate a motion-controlled cursor that allows them to scroll and navigate their watch.

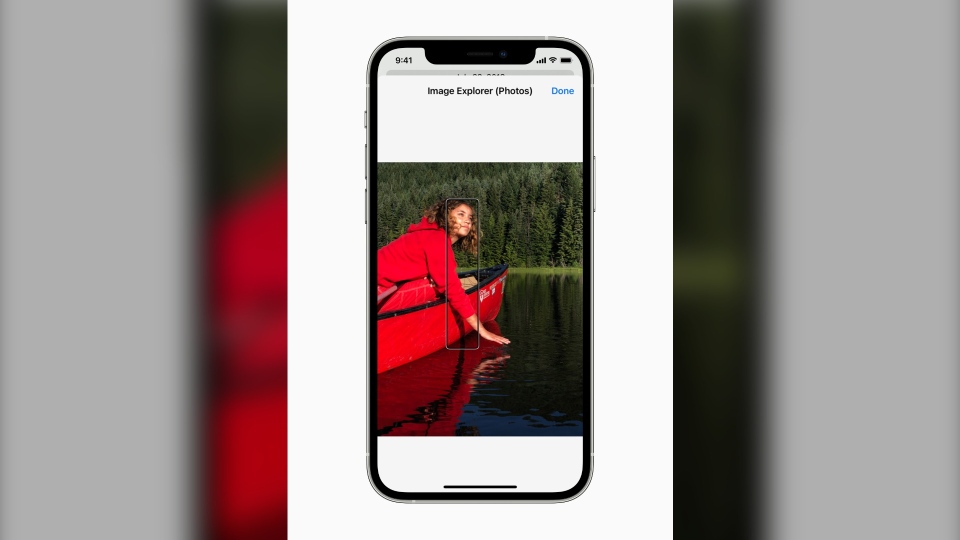

iPhone users who are blind or have low vision have long relied on Apple's VoiceOver feature. With VoiceOver, users can slide their finger along the screen and a voice will read out the icons or text under their finger.

Now, Apple is enhancing VoiceOver, enabling iPhones to read out automatically generated image descriptions. VoiceOver users can slide their finger along a photo and hear descriptions of specific objects within it.

The iPad is also getting support for eye-tracking devices, allowing users to control their iPad with a cursor that tracks their eye movements.

Apple is even launching new Memoji customizations, allowing users to fit their avatar with oxygen tubes, cochlear implants and a soft helmet for better representation.

Apple also announced support for bi-directional hearing aids, which can be used for video calling. Users who are deaf or hard of hearing can also allow the iPhone to automatically adjust frequency levels based on audiograms, which are charts that show the results of a user's hearing test.

For individuals who are neurodivergent and need help focusing, such as people with autism or attention deficit hyperactivity disorder, Apple is adding a feature that lets iPhone users play constant background sounds, such as the sound of rain or the ocean, to help tune out unwanted environmental noise. These sounds will keep playing in the background even if the device is also playing a video or a song.

The company says these features will be available later this year through a software update. The announcements coincided with Global Accessibility Awareness Day, which was marked on Thursday.

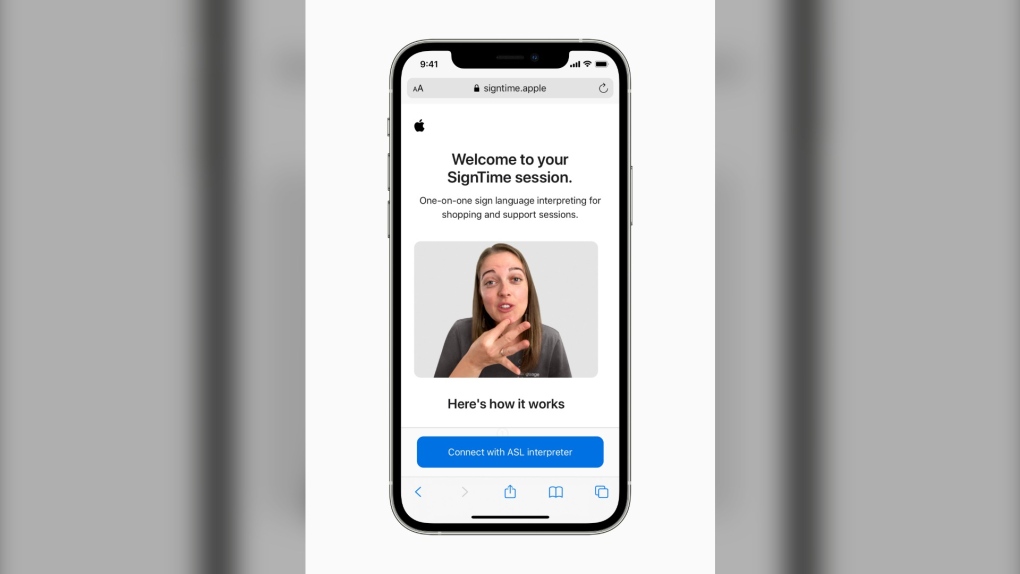

On Thursday, Apple also launched SignTime, a service that instantly connects customers with a sign language interpreter on their phone while they communicate with customer service representatives at Apple Store locations. The service is launching first in the U.S., U.K. and France. While it is not yet available in Canada, Canadian customers seeking a sign language interpreter at an Apple Store can still request one in advance via email.